How AI Identifies Real Production Losses in 48 Hours: A 2026 Manufacturing Guide

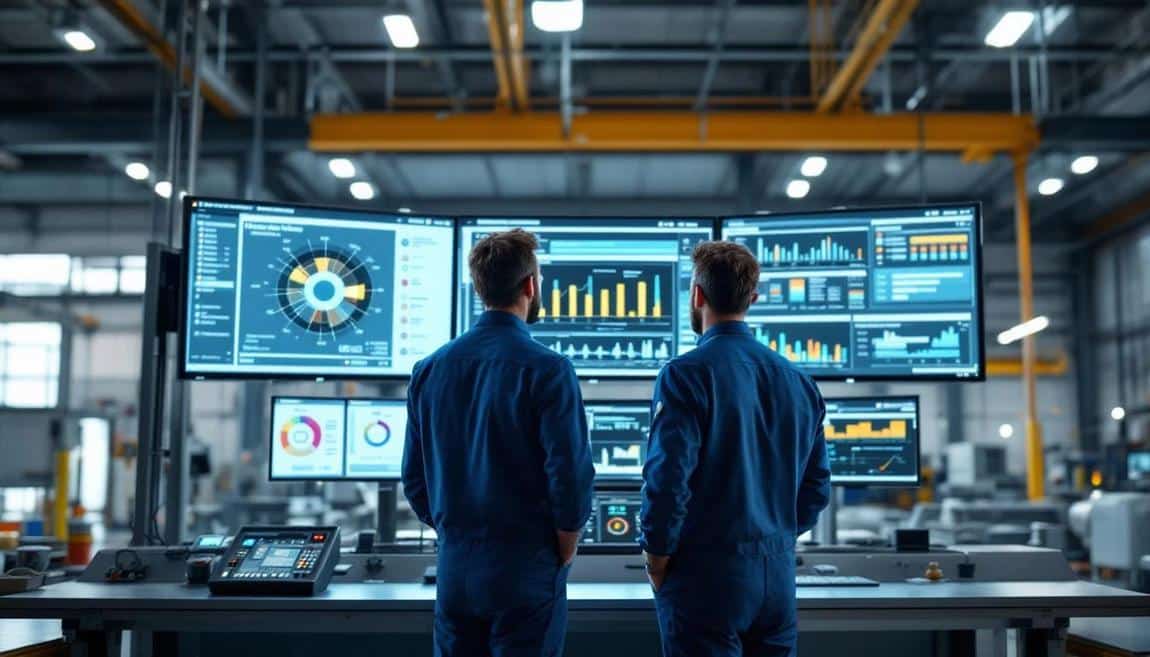

Most US manufacturing plants believe they understand their production losses. The maintenance manager has theories about which machines fail most. The shift supervisor knows which products run slow. The operations director has a quarterly Pareto chart. But when an AI-powered OEE platform is installed at one of these plants and runs for 48 hours, the data consistently surprises everyone, including the most experienced staff. The losses the team has been focused on are typically not the largest ones. The largest tend to be the micro-stops that nobody tracks, the speed losses on “running well” lines, the quality issues hiding in product mix variations, and the shift handover dead time. AI surfaces patterns that human pattern recognition misses because the data volume exceeds human cognitive capacity.

This article explains how AI-powered loss identification works in practice — what 48 hours of data analysis reveals, why human-driven analysis consistently misses the same categories of loss, and what the realistic AI capabilities are versus marketing exaggeration. The framing is honest: AI is not magic, and most of what is marketed as “AI manufacturing” in 2026 is statistical pattern detection rather than artificial general intelligence. But targeted statistical pattern detection at 1-second granularity across hundreds of machine signals genuinely does what humans cannot do at scale.

Why Human Analysis Misses the Real Losses

The mathematical reason human analysis misses real losses is data volume. A modern production line with sensors capturing run-state, cycle time, vibration, current, and temperature at 1-second granularity produces approximately 432,000 data points per machine per day (5 signals × 86,400 seconds). A plant with 15 machines generates about 6.5 million data points per day. Human pattern recognition can effectively process about 50-100 events per shift before cognitive overload, so well over 6 million data points go uninspected every day. The loss patterns hiding in those uninspected points are typically larger than the patterns visible in the 100 or so events the team focused on.
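The data-volume arithmetic can be checked with a short sketch. The signal count and 1-second sampling come from the paragraph above; the 8-hour shift length is an assumption added for comparison. Under these assumptions, the 432,000 figure corresponds to 24 hours of data per machine.

```python
# Five signals named in the text: run-state, cycle time, vibration, current, temperature.
SIGNALS = 5
SAMPLES_PER_SECOND = 1          # 1-second granularity
MACHINES = 15
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400
SECONDS_PER_SHIFT = 8 * 60 * 60 # assumption: 8-hour shift


def points_per_machine(seconds, signals=SIGNALS, rate=SAMPLES_PER_SECOND):
    """Number of individual sensor readings one machine produces in `seconds`."""
    return seconds * signals * rate


per_machine_day = points_per_machine(SECONDS_PER_DAY)
per_plant_day = per_machine_day * MACHINES
per_machine_shift = points_per_machine(SECONDS_PER_SHIFT)

print(f"per machine per day:   {per_machine_day:,}")
print(f"per plant per day:     {per_plant_day:,}")
print(f"per machine per shift: {per_machine_shift:,}")
```

Either way, the gap between millions of readings and the 50-100 events a person can review is several orders of magnitude.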

The structural patterns missed by humans fall into four categories. Pattern 1: Micro-stops below the threshold of operator attention — 30-second to 2-minute stops that operators don’t bother logging because each individual one feels trivial. Cumulatively, these account for 15-30% of total downtime in most plants. Pattern 2: Speed losses on “running” lines — machines marked as “running” but actually running at 70-85% of nameplate speed for hours. Performance OEE shows the loss but most reporting systems hide it. Pattern 3: Quality losses correlated with product mix transitions — defect rates spike during the first 30 minutes after a product changeover, but the loss is attributed to “setup” rather than the actual root cause. Pattern 4: Shift handover dead time — 8-15 minutes of low productivity per shift change, accumulating to substantial weekly losses but invisible because each instance is short.
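As an illustration of Pattern 1, here is a minimal sketch of how short stops might be pulled out of a 1-second run-state signal and totaled. The function names, the 0/1 encoding of run-state, and the synthetic signal are all assumptions for this example, not any specific platform's method. The 30-second and 2-minute bounds come from the paragraph above.

```python
from itertools import groupby


def find_stops(run_state, sample_seconds=1):
    """Return (start_index, duration_seconds) for each contiguous stopped run.

    run_state is a sequence of samples: 1 = running, 0 = stopped (assumed encoding).
    """
    stops = []
    index = 0
    for state, group in groupby(run_state):
        length = sum(1 for _ in group)
        if state == 0:
            stops.append((index, length * sample_seconds))
        index += length
    return stops


def micro_stop_summary(run_state, min_s=30, max_s=120):
    """Total up stops whose duration falls in the micro-stop window."""
    stops = find_stops(run_state)
    micro = [duration for _, duration in stops if min_s <= duration <= max_s]
    total_down = sum(duration for _, duration in stops)
    return {
        "micro_stop_count": len(micro),
        "micro_stop_seconds": sum(micro),
        "total_down_seconds": total_down,
        "micro_share": sum(micro) / total_down if total_down else 0.0,
    }


# Synthetic signal: two short stops (45 s, 90 s) and one long stop (600 s).
signal = [1] * 600 + [0] * 45 + [1] * 300 + [0] * 90 + [1] * 200 + [0] * 600 + [1] * 100
summary = micro_stop_summary(signal)
print(summary)
```

The point of the sketch is that no operator logging is needed: the stops are recovered from the run-state signal itself, so events too short to be written down still get counted.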

What 48 Hours of AI Analysis Actually Produces

The 48-hour window is sufficient for AI analysis to identify these structural patterns with statistical confidence. Specifically, the analysis produces: (1) Loss Pareto by category — ranked list of loss causes by total minutes lost, including the previously invisible categories. (2) Loss attribution to specific machines, products, shifts, and operators — not to assign blame, but to identify the conditions where losses concentrate. (3) Correlation analysis — which losses tend to cluster together (e.g., specific machine + specific product + specific shift). (4) Anomaly detection — events that deviate from the operational baseline by more than 2-3 standard deviations, suggesting equipment issues or process drift. (5) Predictive indicators — patterns in the 48 hours that, based on historical data from similar plants, predict equipment failures or quality issues in the following week.
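The anomaly detection in item (4) can be sketched as a simple z-score test against a baseline. This is a simplified illustration rather than a description of how any particular platform implements it. The function name, the cycle-time values, and the choice of 3 standard deviations as the cutoff are assumptions for this example.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value, z_score) for observations far from the baseline mean.

    baseline: readings taken during normal operation (needs at least 2 values).
    threshold: number of standard deviations that counts as anomalous.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return []  # a perfectly flat baseline gives no scale for deviation
    flagged = []
    for i, value in enumerate(observed):
        z = (value - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, value, z))
    return flagged


# Hypothetical cycle times in seconds.
baseline_cycle_times = [12.0, 12.1, 11.9, 12.0, 12.2, 11.8, 12.0, 12.1, 11.9, 12.0]
new_cycle_times = [12.0, 12.1, 13.5, 11.9, 12.0]

anomalies = flag_anomalies(baseline_cycle_times, new_cycle_times)
for index, value, z in anomalies:
    print(f"sample {index}: {value} s is {z:.1f} standard deviations from baseline")
```

A real deployment would likely need a per-product or per-machine baseline, since a cycle time that is normal for one product can be anomalous for another.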

The output is typically a 15-25 page report covering the analysis findings, plus the live dashboard showing real-time data going forward. The report ranks the top 5-7 actionable opportunities by estimated annual impact in dollars, allowing leadership to prioritize improvement initiatives by ROI rather than by intuition.
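Ranking opportunities by estimated annual impact can be sketched as a Pareto calculation. Everything numeric here is hypothetical: the loss minutes, the cost per minute, and the 250 operating days per year are placeholder assumptions, and the linear scaling from 48 hours to a year is the crudest possible extrapolation.

```python
def rank_opportunities(loss_minutes_48h, cost_per_minute, operating_days_per_year=250):
    """Rank loss categories by estimated annual dollar impact.

    loss_minutes_48h: mapping of category -> minutes lost in the 48-hour window.
    Returns a list of (category, annual_dollars, cumulative_share), largest first.
    """
    scale = operating_days_per_year / 2  # 48 hours treated as 2 operating days
    ranked = sorted(
        ((category, minutes * scale * cost_per_minute)
         for category, minutes in loss_minutes_48h.items()),
        key=lambda item: item[1],
        reverse=True,
    )
    total = sum(dollars for _, dollars in ranked)
    rows = []
    cumulative = 0.0
    for category, dollars in ranked:
        cumulative += dollars
        rows.append((category, dollars, cumulative / total if total else 0.0))
    return rows


# Hypothetical minutes lost per category over 48 hours.
losses = {
    "micro-stops": 180,
    "speed loss": 240,
    "changeover quality": 90,
    "shift handover": 60,
}

rows = rank_opportunities(losses, cost_per_minute=20)
for category, dollars, share in rows:
    print(f"{category:<20} ${dollars:>10,.0f}   cumulative {share:.0%}")
```

The cumulative share column is what makes this a Pareto view: it shows how few categories account for most of the estimated loss.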

The Honest Limits of 48-Hour AI Analysis

Forty-eight hours of data leaves several things unanswered. Long-cycle patterns: weekly or seasonal patterns require longer observation windows. A plant that runs differently on Mondays vs Tuesdays needs 4-8 weeks of data to characterize that pattern. Rare event analysis: events that happen once a month (specific equipment failures, quality crises) cannot be analyzed in 48 hours. Cross-plant comparisons: meaningful benchmarking against similar plants requires anonymized data from at least 50-100 comparable facilities, which most platforms accumulate only after their customer base has grown for several months. Predictive accuracy beyond roughly a week: predictive maintenance models trained on 48 hours of data have meaningful accuracy only about 5-10 days ahead; they need 3-6 months of operational data to predict reliably at longer horizons.

This honesty matters because the marketing for AI manufacturing platforms often suggests immediate transformative insight. The reality is more measured: AI in 48 hours identifies structural loss patterns reliably, predicts short-term issues with reasonable accuracy, and produces ROI-prioritized improvement opportunities. It does not replace months of operational learning or substitute for engineering analysis on complex problems.

The Five Categories Where AI Consistently Beats Human Analysis

Across 450+ deployments, AI-powered analysis consistently outperforms human analysis in five specific dimensions. Category 1: Aggregating thousands of small events into actionable patterns. Humans see individual events; AI sees patterns of events. Category 2: Multi-variable correlation analysis. Humans struggle with 3+ variable interactions; AI handles dozens. Category 3: Anomaly detection during normal operation. Humans notice anomalies during clear failures but miss subtle drift; AI catches drift before it becomes failure. Category 4: Loss attribution across confounding factors. Humans tend to attribute losses to the most recent visible event; AI uses statistical analysis to identify the actual driver. Category 5: Continuous learning from new data. Humans tend to update their mental models slowly; AI updates with each new shift’s data.

What Plants Should Do Differently With AI Analysis

The strategic implication of AI loss identification is that improvement programs should be re-prioritized based on data, not intuition. Most plants have a list of 15-25 improvement initiatives, ranked roughly by leadership preference. AI analysis typically reveals that 60-70% of those initiatives address minor losses, while 30-40% of total loss is concentrated in 3-5 areas not on the list. The recommendation: run a 48-hour AI analysis before launching the next improvement cycle, then use the data to re-rank the initiative portfolio. Plants that do this typically see 2-3x the ROI on improvement investment compared with plants that follow intuition-led prioritization.
